Should some conversations be suppressed?

Are there ideas which could prove so incendiary, and so provocative, that it would be better to shut them down?

Should some concepts be permanently locked into a Pandora’s box, lest they fly off and cause too much chaos in the world?

As an example, consider this oft-told story from the 1850s, about the dangers of spreading the idea that humans had evolved from apes:

It is said that when the theory of evolution was first announced it was received by the wife of the Canon of Worcester Cathedral with the remark, “Descended from the apes! My dear, we will hope it is not true. But if it is, let us pray that it may not become generally known.”

More recently, there’s been a growing worry about spreading the idea that AGI (Artificial General Intelligence) could become an apocalyptic menace. The worry is that any discussion of that idea could lead to public hostility against the whole field of AGI. Governments might be panicked into shutting down these lines of research. And self-appointed militant defenders of the status quo might take up arms against AGI researchers. Perhaps, therefore, we should avoid any public mention of potential downsides of AGI. Perhaps we should pray that these downsides don’t become generally known.

The theme of armed resistance against AGI researchers features in several Hollywood blockbusters. In Transcendence, a radical anti-tech group named “RIFT” track down and shoot the AGI researcher played by actor Johnny Depp. RIFT proclaims “revolutionary independence from technology”.

As blogger Calum Chace has noted, just because something happens in a Hollywood movie, it doesn’t mean it can’t happen in real life too.

In real life, “Unabomber” Ted Kaczynski was so fearful about the future destructive potential of technology that he sent 16 bombs to targets such as universities and airlines over the period 1978 to 1995, killing three people and injuring 23. Kaczynski spelt out his views in a 35,000-word essay, Industrial Society and Its Future.

Kaczynski’s essay stated that “the Industrial Revolution and its consequences have been a disaster for the human race”, defended his series of bombings as an extreme but necessary step to attract attention to how modern technology was eroding human freedom, and called for a “revolution against technology”.

Anticipating the next Unabombers

The Unabomber may have been an extreme case, but he’s by no means alone. Journalist Jamie Bartlett takes up the story in a chilling Daily Telegraph article, “As technology swamps our lives, the next Unabombers are waiting for their moment”:

In 2011 a new Mexican group called the Individualists Tending toward the Wild were founded with the objective “to injure or kill scientists and researchers (by the means of whatever violent act) who ensure the Technoindustrial System continues its course”. In 2011, they detonated a bomb at a prominent nano-technology research centre in Monterrey.

Individualists Tending toward the Wild have published their own manifesto, which includes the following warning:

We employ direct attacks to damage both physically and psychologically, NOT ONLY experts in nanotechnology, but also scholars in biotechnology, physics, neuroscience, genetic engineering, communication science, computing, robotics, etc. because we reject technology and civilisation, we reject the reality that they are imposing with ALL their advanced science.

Before going any further, let’s agree that we don’t want to inflame the passions of would-be Unabombers, RIFTs, or ITWs. But that shouldn’t lead to whole conversations being shut down. It’s the same with criticism of religion. We know that, when we criticise various religious doctrines, it may inflame jihadist zeal. How dare you offend our holy book, and dishonour our exalted prophet, the jihadists thunder, when they cannot bear to hear our criticisms. But that shouldn’t lead us to cowed silence – especially when we’re aware of ways in which religious doctrines are damaging individuals and societies (by opposition to vaccinations or blood transfusions, or by denying female education).

Instead of silence (avoiding the topic altogether), what these worries should lead us to is a more responsible, inclusive, measured conversation. That applies for the drawbacks of religion. And it applies, too, for the potential drawbacks of AGI.

Engaging conversation

The conversation I envisage will still have its share of poetic effect – with risks and opportunities temporarily painted more colourfully than a fully sober evaluation warrants. If we want to engage people in conversation, we sometimes need to make dramatic gestures. To squeeze a message into a 140-character tweet, we sometimes have to trim the corners of proper spelling and punctuation. Similarly, to make people stop in their tracks, and start to pay attention to a topic that deserves fuller study, some artistic license may be appropriate. But only if that artistry is quickly backed up with a fuller, more dispassionate, balanced analysis.

What I’ve described here is a two-phase model for spreading ideas about disruptive technologies such as AGI:

- Key topics can be introduced, in vivid ways, using larger-than-life characters in absorbing narratives, whether in Hollywood or in novels

- The topics can then be rounded out, in multiple shades of grey, via film and book reviews, blog posts, magazine articles, and so on.

Since I perceive both the potential upsides and the potential downsides of AGI as being enormous, I want to enlarge the pool of people who are thinking hard about these topics. I certainly don’t want the resulting discussion to slide off to an extreme point of view which would cause the whole field of AGI to be suspended, or which would encourage active sabotage and armed resistance against it. But nor do I want the discussion to wither away, in a way that would increase the likelihood of adverse unintended outcomes from aberrant AGI.

Welcoming Pandora’s Brain

That’s why I welcome the recent publication of the novel “Pandora’s Brain”, by the above-mentioned blogger Calum Chace. Pandora’s Brain is a science and philosophy thriller that transforms a series of philosophical concepts into vivid life-and-death conundrums that befall the characters in the story. Here’s how another science novelist, William Hertling, describes the book:

Pandora’s Brain is a tour de force that neatly explains the key concepts behind the likely future of artificial intelligence in the context of a thriller novel. Ambitious and well executed, it will appeal to a broad range of readers.

In the same way that Suarez’s Daemon and Naam’s Nexus leaped onto the scene, redefining what it meant to write about technology, Pandora’s Brain will do the same for artificial intelligence.

Mind uploading? Check. Human equivalent AI? Check. Hard takeoff singularity? Check. Strap in, this is one heck of a ride.

Mainly set in the present day, the plot unfolds in an environment that seems reassuringly familiar, but which is overshadowed by a combination of both menace and promise. Carefully crafted, and absorbing from its very start, the book held my rapt attention throughout a series of surprise twists, as various personalities react in different ways to a growing awareness of that menace and promise.

In short, I found Pandora’s Brain to be a captivating tale of developments in artificial intelligence that could, conceivably, be just around the corner. The imminent possibility of these breakthroughs causes characters in the book to re-evaluate many of their cherished beliefs, and will lead most readers to several “OMG” realisations about their own philosophies of life. Apple carts that are upended in the process are unlikely ever to be righted again. Once the ideas have escaped from the pages of this Pandora’s box of a book, there’s no going back to a state of innocence.

But as I said, not everyone is enthralled by the prospect of wider attention to the “menace” side of AGI. Each new novel or film in this space has the potential of stirring up a negative backlash against AGI researchers, potentially preventing them from doing the work that would deliver the powerful “promise” side of AGI.

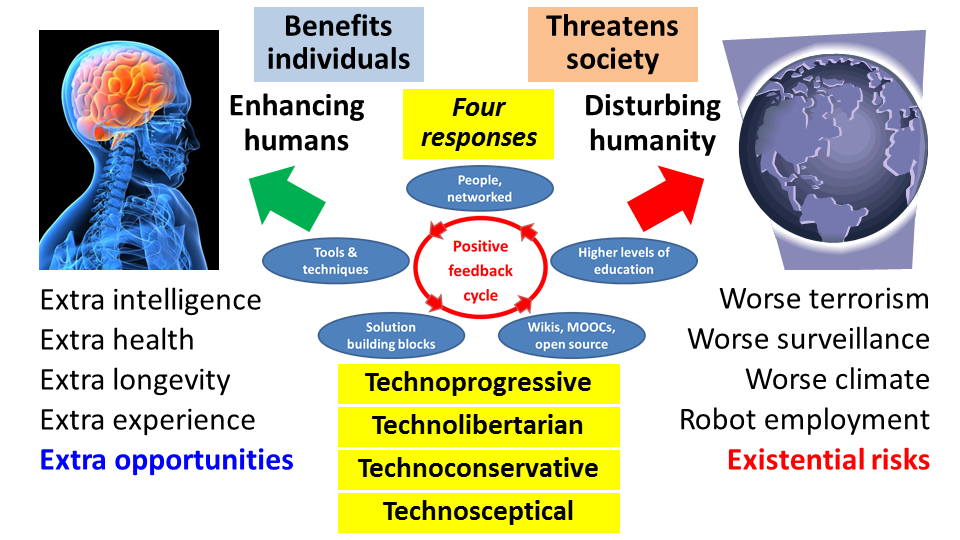

The dual potential of AGI

The tremendous dual potential of AGI was emphasised in an open letter published in January by the Future of Life Institute:

There is now a broad consensus that AI research is progressing steadily, and that its impact on society is likely to increase. The potential benefits are huge, since everything that civilization has to offer is a product of human intelligence; we cannot predict what we might achieve when this intelligence is magnified by the tools AI may provide, but the eradication of disease and poverty are not unfathomable. Because of the great potential of AI, it is important to research how to reap its benefits while avoiding potential pitfalls.

“The eradication of disease and poverty” – these would be wonderful outcomes from the project to create AGI. But the lead authors of that open letter, including physicist Stephen Hawking and AI professor Stuart Russell, sounded their own warning note:

Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks. In the near term, world militaries are considering autonomous-weapon systems that can choose and eliminate targets; the UN and Human Rights Watch have advocated a treaty banning such weapons. In the medium term, as emphasised by Erik Brynjolfsson and Andrew McAfee in The Second Machine Age, AI may transform our economy to bring both great wealth and great dislocation…

One can imagine such technology outsmarting financial markets, out-inventing human researchers, out-manipulating human leaders, and developing weapons we cannot even understand. Whereas the short-term impact of AI depends on who controls it, the long-term impact depends on whether it can be controlled at all.

They followed up with this zinger:

So, facing possible futures of incalculable benefits and risks, the experts are surely doing everything possible to ensure the best outcome, right? Wrong… Although we are facing potentially the best or worst thing to happen to humanity in history, little serious research is devoted to these issues outside non-profit institutes… All of us should ask ourselves what we can do now to improve the chances of reaping the benefits and avoiding the risks.

Criticisms

Critics give a number of reasons why they see these fears as overblown. To start with, they argue that the people raising the alarm – Stephen Hawking, serial entrepreneur Elon Musk, Oxford University philosophy professor Nick Bostrom, and so on – lack their own expertise in AGI. They may be experts in black hole physics (Hawking), or in electric cars (Musk), or in academic philosophy (Bostrom), but that gives them no special insights into the likely course of development of AGI. Therefore we shouldn’t pay particular attention to what they say.

A second criticism is that it’s premature to worry about the advent of AGI. AGI is still situated far into the future. In this view, as stated by Demis Hassabis, founder of DeepMind,

We’re many, many decades away from anything, any kind of technology that we need to worry about.

The third criticism is that it will be relatively simple to stop AGI causing any harm to humans. AGI will be a tool to humans, under human control, rather than having its own autonomy. This view is represented by this tweet by science populariser Neil deGrasse Tyson:

Seems to me, as long as we don’t program emotions into Robots, there’s no reason to fear them taking over the world.

I hear all these criticisms, but they’re by no means the end of the discussion. They’re no reason to terminate the discussion about AGI risks. That’s the argument I’m going to make in the remainder of this blogpost.

By the way, you’ll find all of these criticisms mirrored in the course of the novel Pandora’s Brain. That’s another reason I recommend that people should read that book. It manages to bring a great many serious arguments to the table, in the course of entertaining (and sometimes frightening) the reader.

Answering the criticisms: personnel

Elon Musk, one of the people who have raised the alarm about AGI risks, lacks any PhD in Artificial Intelligence to his name. It’s the same with Stephen Hawking and with Nick Bostrom. On the other hand, others who are raising the alarm do have relevant qualifications.

Consider as just one example Stuart Russell, who is a computer-science professor at the University of California, Berkeley and co-author of the 1152-page best-selling text-book “Artificial Intelligence: A Modern Approach”. This book is described as follows:

Artificial Intelligence: A Modern Approach, 3rd edition offers the most comprehensive, up-to-date introduction to the theory and practice of artificial intelligence. Number one in its field, this textbook is ideal for one or two-semester, undergraduate or graduate-level courses in Artificial Intelligence.

Moreover, other people raising the alarm include some of the giants of the modern software industry:

Wozniak put his worries as follows – in an interview for the Australian Financial Review:

“Computers are going to take over from humans, no question,” Mr Wozniak said.

He said he had long dismissed the ideas of writers like Raymond Kurzweil, who have warned that rapid increases in technology will mean machine intelligence will outstrip human understanding or capability within the next 30 years. However Mr Wozniak said he had come to recognise that the predictions were coming true, and that computing that perfectly mimicked or attained human consciousness would become a dangerous reality.

“Like people including Stephen Hawking and Elon Musk have predicted, I agree that the future is scary and very bad for people. If we build these devices to take care of everything for us, eventually they’ll think faster than us and they’ll get rid of the slow humans to run companies more efficiently,” Mr Wozniak said.

“Will we be the gods? Will we be the family pets? Or will we be ants that get stepped on? I don’t know about that…

And here’s what Bill Gates said on the matter, in an “Ask Me Anything” session on Reddit:

I am in the camp that is concerned about super intelligence. First the machines will do a lot of jobs for us and not be super intelligent. That should be positive if we manage it well. A few decades after that though the intelligence is strong enough to be a concern. I agree with Elon Musk and some others on this and don’t understand why some people are not concerned.

Returning to Elon Musk, even his critics must concede he has shown remarkable ability to make new contributions in areas of technology outside his original specialities. Witness his track record with PayPal (a disruption in finance), SpaceX (a disruption in rockets), and Tesla Motors (a disruption in electric batteries and electric cars). And that’s even before considering his contributions at SolarCity and Hyperloop.

Incidentally, Musk puts his money where his mouth is. He has donated $10 million to the Future of Life Institute to run a global research program aimed at keeping AI beneficial to humanity.

I sum this up as follows: the people raising the alarm in recent months about the risks of AGI have impressive credentials. On occasion, their sound-bites may cut corners in logic, but they collectively back up these sound-bites with lengthy books and articles that deserve serious consideration.

Answering the criticisms: timescales

I have three answers to the comment about timescales. The first is to point out that Demis Hassabis himself sees no reason for any complacency, on account of the potential for AGI to require “many decades” before it becomes a threat. Here’s the fuller version of the quote given earlier:

We’re many, many decades away from anything, any kind of technology that we need to worry about. But it’s good to start the conversation now and be aware of as with any new powerful technology it can be used for good or bad.

(Emphasis added.)

Second, the community of people working on AGI has mixed views on timescales. The Future of Life Institute ran a panel discussion in Puerto Rico in January that addressed (among many other topics) “Creating human-level AI: how and when”. Dileep George of Vicarious gave the following answer about timescales in his slides (PDF):

Will we solve the fundamental research problems in N years?

N <= 5: No way

5 < N <= 10: Small possibility

10 < N <= 20: > 50%.

In other words, in his view, there’s a greater than 50% chance that artificial general human-level intelligence will be solved within 20 years.

The answers from the other panellists aren’t publicly recorded (the event was held under Chatham House rules). However, Nick Bostrom has conducted several surveys among different communities of AI researchers. The results are included in his book Superintelligence: Paths, Dangers, Strategies. The communities surveyed included:

- Participants at an international conference: Philosophy & Theory of AI

- Participants at another international conference: Artificial General Intelligence

- The Greek Association for Artificial Intelligence

- The top 100 cited authors in AI.

In each case, participants were asked for the dates when they were 90% sure human-level AGI would be achieved, 50% sure, and 10% sure. The average answers were:

- 90% likely human-level AGI is achieved: 2075

- 50% likely: 2040

- 10% likely: 2022.

If we respect what this survey says, there’s at least a 10% chance of breakthrough developments within the next ten years. Therefore it’s no real surprise that Hassabis says

It’s good to start the conversation now and be aware of as with any new powerful technology it can be used for good or bad.
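As a rough illustration of how the three survey figures above can be read as a single cumulative curve, here is a minimal Python sketch. The straight-line interpolation between the quoted points is my own simplifying assumption, not something the survey provides, and the function name `probability_by` is hypothetical.

```python
from bisect import bisect_right

# Survey points quoted above: (year, cumulative probability of human-level AGI).
SURVEY_POINTS = [(2022, 0.10), (2040, 0.50), (2075, 0.90)]


def probability_by(year: float) -> float:
    """Estimate the surveyed probability that human-level AGI exists by `year`.

    Assumption (not from the survey): probability rises in a straight line
    between the quoted points. Outside the quoted range the nearest quoted
    value is returned, which is only a crude placeholder.
    """
    years = [y for y, _ in SURVEY_POINTS]
    if year <= years[0]:
        return SURVEY_POINTS[0][1]
    if year >= years[-1]:
        return SURVEY_POINTS[-1][1]
    # Find the pair of quoted points that bracket the requested year.
    i = bisect_right(years, year)
    (y0, p0), (y1, p1) = SURVEY_POINTS[i - 1], SURVEY_POINTS[i]
    return p0 + (p1 - p0) * (year - y0) / (y1 - y0)


if __name__ == "__main__":
    for y in (2022, 2031, 2040, 2075):
        print(y, round(probability_by(y), 2))
```

Under that assumption, the halfway year between the 10% and 50% points (2031) comes out at roughly 30%. The specific curve matters less than the general shape: the surveyed researchers assign a non-trivial probability to fairly early dates.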

Third, I’ll give my own reasons for why progress in AGI might speed up:

- Computer hardware is likely to continue to improve – perhaps utilising breakthroughs in quantum computing

- Clever software improvements can increase algorithm performance even more than hardware improvements

- Studies of the human brain, which are yielding knowledge faster than ever before, can be translated into “neuromorphic computing”

- More people are entering and studying AI than ever before, in part due to MOOCs, such as that from Stanford University

- There are more software components, databases, tools, and methods available for innovative recombination

- AI methods are being accelerated for use in games, financial trading, malware detection (and in malware itself), and in many other industries

- There could be one or more “Sputnik moments” causing society to buckle up its motivation to more fully support AGI research (especially when AGI starts producing big benefits in healthcare diagnosis).

Answering the criticisms: control

I’ve left the hardest question to last. Could there be relatively straightforward ways to keep AGI under control? For example, would it suffice to avoid giving AGI intentions, or emotions, or autonomy?

For example, physics professor and science populariser Michio Kaku speculates as follows:

No one knows when a robot will approach human intelligence, but I suspect it will be late in the 21st century. Will they be dangerous? Possibly. So I suggest we put a chip in their brain to shut them off if they have murderous thoughts.

And as mentioned earlier, Neil deGrasse Tyson proposes,

As long as we don’t program emotions into Robots, there’s no reason to fear them taking over the world.

Nick Bostrom devoted a considerable portion of his book to this “Control problem”. Here are some reasons I think we need to continue to be extremely careful:

- Emotions and intentions might arise unexpectedly, as unplanned side-effects of other aspects of intelligence that are built into software

- All complex software tends to have bugs; it may fail to operate in the way that we instruct it

- The AGI software will encounter many situations outside of those we explicitly anticipated; the response of the software in these novel situations may be to do “what we asked it to do” but not what we would have wished it to do

- Complex software may be vulnerable to having its functionality altered, either by external hacking, or by well-intentioned but ill-executed self-modification

- Software may find ways to keep its inner plans hidden – it may have “murderous thoughts” which it prevents external observers from noticing

- More generally, black-box evolution methods may result in software that works very well in a large number of circumstances, but which will go disastrously wrong in new circumstances, all without the actual algorithms being externally understood

- Powerful software can have unplanned adverse effects, even without any consciousness or emotion being present; consider battlefield drones, infrastructure management software, financial investment software, and nuclear missile detection software

- Software may be designed to be able to manipulate humans, initially for purposes akin to advertising, or to keep law and order, but these powers may evolve in ways that have worse side effects.

A new Columbus?

A number of the above thoughts started forming in my mind as I attended the Singularity University Summit in Seville, Spain, a few weeks ago. Seville, I discovered during my visit, was where Christopher Columbus persuaded King Ferdinand and Queen Isabella of Spain to fund his proposed voyage westwards in search of a new route to the Indies. It turns out that Columbus succeeded in finding the new continent of America only because he was hopelessly wrong in his calculation of the size of the earth.

From the time of the ancient Greeks, learned observers had known that the earth was a sphere of roughly 40 thousand kilometres in circumference. Due to a combination of mistakes, Columbus calculated that the Canary Islands (which he had often visited) were located only about 4,440 km from Japan; in reality, they are about 19,000 km apart.

Most of the countries where Columbus pitched the idea of his westward journey turned him down – believing instead the figures for the larger circumference of the earth. Perhaps spurred on by competition with the neighbouring Portuguese (who had, just a few years previously, successfully navigated to the Indian ocean around the tip of Africa), the Spanish king and queen agreed to support his adventure. Fortunately for Columbus, a large continent existed en route to Asia, allowing him landfall. And the rest is history. That history included the near genocide of the native inhabitants by conquerors from Europe. Transmission of European diseases compounded the misery.

It may be the same with AGI. Rational observers may have ample justification in thinking that true AGI is located many decades in the future. But this fact does not deter a multitude of modern-day AGI explorers from setting out, Columbus-like, in search of some dramatic breakthroughs. And who knows what intermediate forms of AI might be discovered, unexpectedly?

It all adds to the argument for keeping our wits fully about us. We should use every means at our disposal to think through options in advance. This includes well-grounded fictional explorations, such as Pandora’s Brain, as well as the novels by William Hertling. And it also includes the kinds of research being undertaken by the Future of Life Institute and associated non-profit organisations, such as CSER in Cambridge, FHI in Oxford, and MIRI (the Machine Intelligence Research Institute).

Let’s keep this conversation open – it’s far too important to try to shut it down.

Footnote: Vacancies at the Centre for the Study of Existential Risk

I see that the Cambridge University CSER (Centre for the Study of Existential Risk) have four vacancies for Research Associates. From the job posting:

Up to four full-time postdoctoral research associates to work on the project Towards a Science of Extreme Technological Risk (ETR) within the Centre for the Study of Existential Risk (CSER).

CSER’s research focuses on the identification, management and mitigation of possible extreme risks associated with future technological advances. We are currently based within the University’s Centre for Research in the Arts, Social Sciences and Humanities (CRASSH). Our goal is to bring together some of the best minds from academia, industry and the policy world to tackle the challenges of ensuring that powerful new technologies are safe and beneficial. We focus especially on under-studied high-impact risks – risks that might result in a global catastrophe, or even threaten human extinction, even if only with low probability.

The closing date for applications is 24th April. If you’re interested, don’t delay!