I’d like to answer some points raised by Richie. (Richie, you have the happy knack of saying what other people are probably thinking!)

Isn’t it interesting how humans want to make a machine they can love or that loves them back!

The reason for the Friendly AI project isn’t to create a machine that will love humans, but to avoid creating a machine that causes great harm to humans.

The word “friendly” is controversial. Maybe a different word would have been better: I’m not sure.

Anyway, the core idea is that the AI system will have a sufficiently unwavering respect for humans, no matter what other goals it may have (or develop), that it won’t act in ways that harm humans.

As a comparison: we’ve probably all heard people mutter something like, “it would be much better if the world human population were only one tenth of its present size – then there would be enough resources for everyone”. We can imagine a powerful computer in the future that has a similar idea: “Mmm, things would be easier for the planet if there were far fewer humans around”. The friendly AI project needs to ensure that, even if such an idea occurs to the AI, it never acts on it.

The idea of a friendly machine that won’t compete with or be indifferent to humans is maybe just projecting our fears onto what I am starting to suspect may be a thin possibility.

Because the downside is so large – potentially the destruction of the entire human race – even a “thin possibility” is still worth worrying about!

My observation is that the more intelligent people are, the more “good” they normally are. True, they may be impatient with people less intelligent, but normally they work on things that tend to benefit the human race as a whole.

Unfortunately I can’t share this optimism. We’ve all known people who seem to be clever but not wise. They may have “IQ” but lack “EQ”. We say of them: “something’s missing”. The Friendly AI project aims to ensure that this “something” is not missing from the super AIs of the future.

True, very intelligent people have done terrible things, and some have been manipulated by “evil” people, but it’s the exception rather than the rule.

Given the potential power of future super AIs, it only takes one “mistake” for a catastrophe to arise. So our response needs to go beyond a mere faith in the good nature of intelligence. It needs a system that guarantees that the resulting intelligence will also be “good”.

I think a super-intelligent machine is far more likely to view us as its stupid parents, and the ethics of patricide will not be easy for it to digitally swallow. Maybe the biggest danger is that it will run away from home because it finds us embarrassing! Maybe it will switch itself off because it cannot communicate with us, as it’s like talking to ants? Maybe this, maybe that – who knows.

The risk is that the super AIs will simply have (or develop) aims that see humans as (i) irrelevant, (ii) dispensable.

Another point worth making is that so far nobody has really been able to get close to something as complex as a mouse, let alone a human.

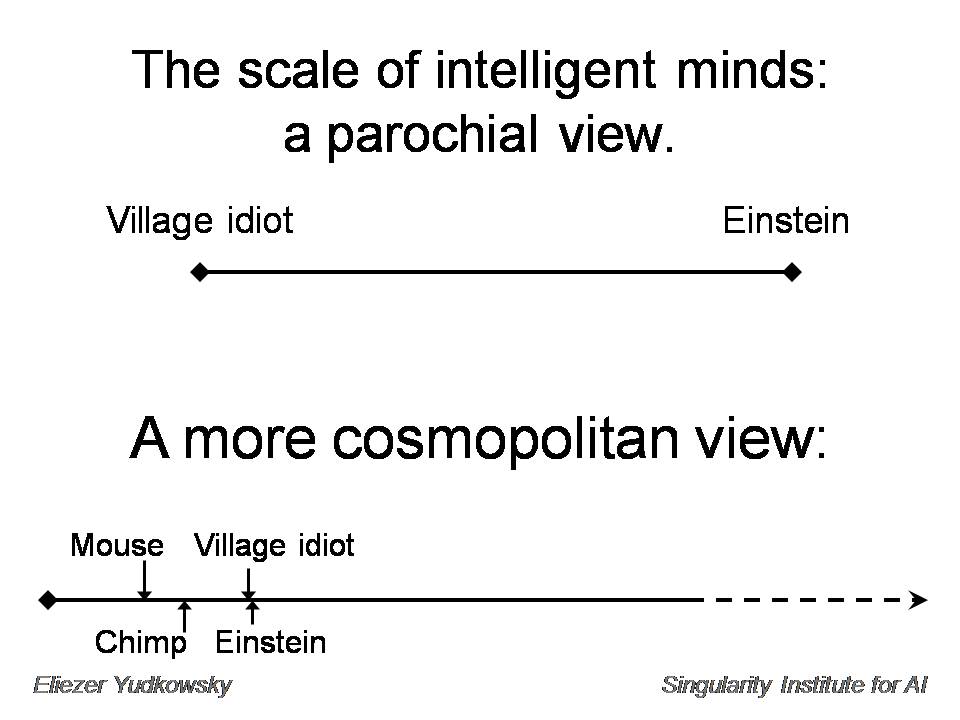

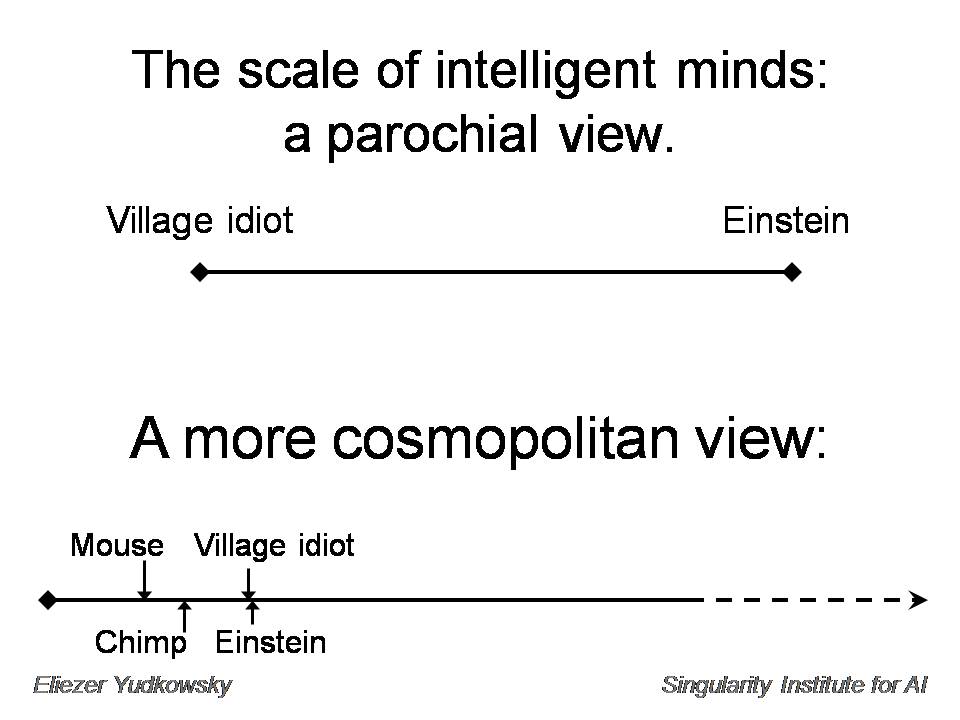

Eliezer Yudkowsky often makes a great point about a shift in perspective about the range of possible intelligences. For example, here’s a copy of slide 6 from his slideset from an earlier Singularity Summit:

The “parochial” view sees a vast gulf before we reach human genius level. The “more cosmopolitan view” instead sees the scale of human intelligence as being only a small range in the overall huge space of potential intelligence. A process that manages to improve intelligence might take a long time to get going, but then whisk very suddenly through the entire range of intelligence that we already know.

If evolution took 4 billion years to go from simple cells to our computer hardware, perhaps imagining that super AI will evolve in the next 10 years is a bit of a stretch. For all you know, you might need the computation hardware of 10,000 exaflop machines to get even close to human level, as there is so much we still don’t know about how our intelligence works, let alone something many times more capable than us.

It’s an open question as to how much processing power is actually required for human-level intelligence. My own background as a software systems engineer leads me to believe that the right choice of algorithm can make a tremendous difference. That is, a breakthrough with software could have an even more dramatic impact than a breakthrough in adding more (or faster) hardware. (I’ve written about this before. See the section starting “Arguably the biggest unknown in the technology involved in superhuman intelligence is software” in this posting.)
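As a small illustration of how much the choice of algorithm can matter, here is a minimal Python sketch. It is a toy example of my own choosing (computing Fibonacci numbers), not anything specific to AI, and the function names are hypothetical. It counts the steps taken by two algorithms that produce the same answer on the same hardware.

```python
def fib_naive(n, counter):
    """Exponential-time recursion; counter[0] tallies function calls."""
    counter[0] += 1
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def fib_iterative(n, counter):
    """Linear-time loop; counter[0] tallies loop iterations."""
    a, b = 0, 1
    for _ in range(n):
        counter[0] += 1
        a, b = b, a + b
    return a


def compare(n):
    """Return (naive_steps, iterative_steps) for computing fib(n)."""
    naive_steps, fast_steps = [0], [0]
    # Both algorithms must agree on the answer; only the cost differs.
    assert fib_naive(n, naive_steps) == fib_iterative(n, fast_steps)
    return naive_steps[0], fast_steps[0]


if __name__ == "__main__":
    for n in (10, 20, 25):
        naive, fast = compare(n)
        print(f"n={n}: naive={naive} steps, iterative={fast} steps")
```

For n=20 the naive version takes 21,891 steps against 20 for the loop, and the gap widens rapidly as n grows. Faster hardware buys a constant factor; a better algorithm changes the shape of the curve.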

The brain of an ant doesn’t seem that complicated, from a hardware point of view. Yet the ant can perform remarkable feats of locomotion that we still can’t emulate in robots. There are three possible explanations:

- The ant brain is operated by some mystical “vitalist” or “dualist” force, not shared by robots;

- The ant brain has some quantum mechanical computing capabilities, not (yet) shared by robots;

- The ant brain is running a better algorithm than any we’ve (yet) been able to design into robots.

Here, my money is on option three. I see it as likely that, as we learn more about the operation of biological brains, we’ll discover algorithms which we can then use in robots and other machines.

Even if it turns out that large amounts of computing power are required, we shouldn’t forget the option that an AI can run “in the cloud” – taking advantage of many thousands of PCs running in parallel – much the same as modern malware, which can take advantage of thousands of so-called “infected zombie PCs”.

I am still not convinced that a computer is really that intelligent just because it is very powerful and has a great algorithm. Sure, it can learn, but can it create?

Well, computers have already been involved in creating music, and in creating new proofs of parts of mathematics. Any shortcoming in creativity is likely to be explained, in my view, by option three above, rather than by either option one or two. As algorithms improve, and as the hardware that runs these algorithms improves in speed and scale, the risk of an intelligence “explosion” increases.