I’ve changed my mind about consciousness.

I used to think that, of the two great problems about artificial minds – namely, achieving artificial general intelligence, and achieving artificial consciousness – progress toward the former would be faster than progress toward the latter.

After all, progress in understanding consciousness had seemed particularly slow, whereas enormous numbers of researchers in both academia and industry have been attaining breakthrough after breakthrough with new algorithms in artificial reasoning.

Over the decades, I’d read a number of books by Daniel Dennett and other philosophers who claimed to have shown that consciousness was basically already understood. There’s nothing spectacularly magical or esoteric about consciousness, Dennett maintained. What’s more, we must beware being misled by our own introspective understanding of our consciousness. That inner introspection is subject to distortions – perceptual illusions, akin to the visual illusions that often mislead us about what we think our eyes are seeing.

But I’d found myself at best semi-convinced by such accounts. I felt that, despite the clever analyses in such accounts, there was surely more to the story.

The most famous expression of the idea that consciousness still defied a proper understanding is the formulation by David Chalmers. This is from his watershed 1995 essay “Facing Up to the Problem of Consciousness”:

> The really hard problem of consciousness is the problem of experience. When we think and perceive, there is a whir of information-processing, but there is also a subjective aspect… There is something it is like to be a conscious organism. This subjective aspect is experience.
>
> When we see, for example, we experience visual sensations: the felt quality of redness, the experience of dark and light, the quality of depth in a visual field. Other experiences go along with perception in different modalities: the sound of a clarinet, the smell of mothballs. Then there are bodily sensations, from pains to orgasms; mental images that are conjured up internally; the felt quality of emotion, and the experience of a stream of conscious thought. What unites all of these states is that there is something it is like to be in them. All of them are states of experience.
>
> It is undeniable that some organisms are subjects of experience. But the question of how it is that these systems are subjects of experience is perplexing. Why is it that when our cognitive systems engage in visual and auditory information-processing, we have visual or auditory experience: the quality of deep blue, the sensation of middle C? How can we explain why there is something it is like to entertain a mental image, or to experience an emotion?
>
> It is widely agreed that experience arises from a physical basis, but we have no good explanation of why and how it so arises. Why should physical processing give rise to a rich inner life at all? It seems objectively unreasonable that it should, and yet it does.

However, as Wikipedia notes,

> The existence of a “hard problem” is controversial. It has been accepted by philosophers of mind such as Joseph Levine, Colin McGinn, and Ned Block and cognitive neuroscientists such as Francisco Varela, Giulio Tononi, and Christof Koch. However, its existence is disputed by philosophers of mind such as Daniel Dennett, Massimo Pigliucci, Thomas Metzinger, Patricia Churchland, and Keith Frankish, and cognitive neuroscientists such as Stanislas Dehaene, Bernard Baars, Anil Seth and Antonio Damasio.

With so many smart people apparently unable to agree, what hope is there for a layperson to have any confidence in answering the question: is consciousness already explained in principle, or do we need some fundamentally new insights?

It’s tempting to say, therefore, that the question should be left to one side. Instead of squandering energy spinning circles of ideas with little prospect of real progress, it would be better to concentrate on numerous practical questions: vaccines for pandemics, climate change, taking the sting out of psychological malware, protecting democracy against latent totalitarianism, and so on.

That practical orientation is the one that I have tried to follow most of the time. But there are four reasons, nevertheless, to keep returning to the question of understanding consciousness. A better understanding of consciousness might:

- Help provide therapists and counsellors with new methods to address the growing crisis of mental ill-health

- Change our attitudes towards the suffering we inflict, as a society, upon farm animals, fish, and other creatures

- Provide confidence on whether copying of memories and other patterns of brain activity, into some kind of silicon storage, could result at some future date in the resurrection of our consciousness – or whether any such reanimation would, instead, be “only a copy” of us

- Guide the ways in which systems of artificial intelligence are being created.

On that last point, consider the question whether AI systems will somehow automatically become conscious, as they gain in computational ability. Most AI researchers have been sceptical on that score. Google Maps is not conscious, despite all the profoundly clever things that it can do. Neither is your smartphone. As for the Internet as a whole, opinions are a bit more mixed, but again, the general consensus is that all the electronic processing happening on the Internet is devoid of the kind of subjective inner experience described by David Chalmers.

Yes, lots of software has elements of being self-aware. Such software contains models of itself. But it’s generally thought (and I agree, for what it’s worth) that such internal modelling is far short of subjective inner experience.

One prospect this raises is the dark possibility that humans might be superseded by AIs that are considerably more intelligent than us, but that such AIs would have “no-one at home”, that is, no inner consciousness. In that case, a universe with AIs instead of humans might have much more information processing, but be devoid of conscious feelings. Mega oops.

The discussion at this point is sometimes led astray by the popular notion that any threat from superintelligent AIs to human existence is predicated on these AIs “waking up” or becoming conscious. In that popular narrative, any such waking up might give an AI an additional incentive to preserve itself. Such an AI might adopt destructive human “alpha male” combative attitudes. But that’s a faulty line of reasoning. AIs might well be motivated to preserve themselves without ever gaining any consciousness. (Look up the concept of “basic AI drives” by Steve Omohundro.) Indeed, a cruise missile that locks onto a target poses a threat to that target, not because the missile is somehow conscious, but because it has enough intelligence to navigate to its target and explode on arrival.

Indeed, AIs can pose threats to people’s employment, without these AIs gaining consciousness. They can simulate emotions without having real internal emotions. They can create artistic masterpieces, using techniques such as GANs (Generative Adversarial Networks), without having any real psychological appreciation of the beauty of these works of art.

For these reasons, I’ve generally urged people to set aside the question of machine consciousness, and to focus instead on the question of machine intelligence. (For example, I presented that argument in Chapter 9 of my book Sustainable Superabundance.) The latter is tangible and poses increasing threats (and opportunities), whereas the former is a discussion that never seems to get off the ground.

But, as I mentioned at the start, I’ve changed my mind. I now think it’s possible we could have machines with synthetic consciousness well before we have machines with general intelligence.

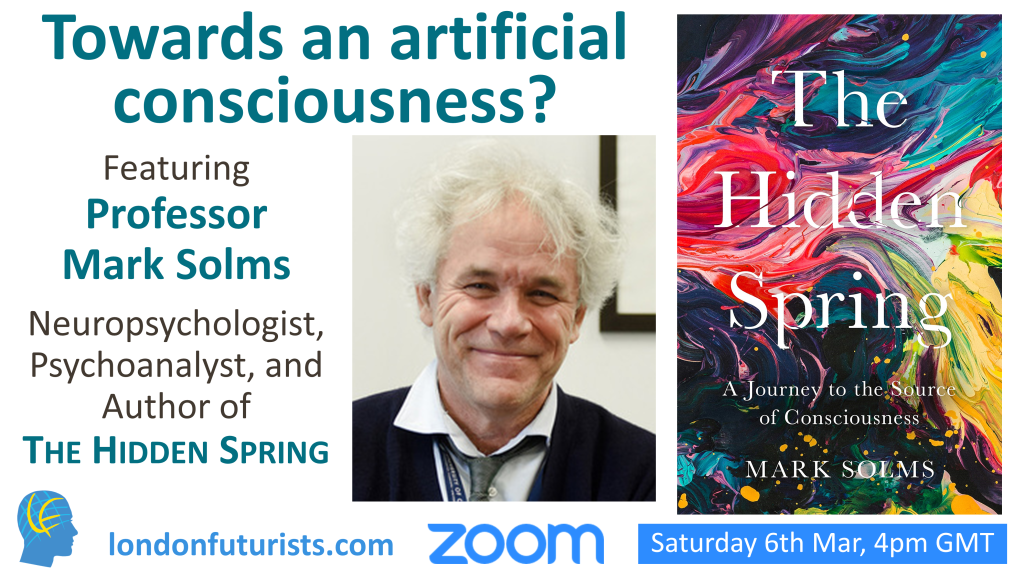

What’s changed my mind is the book by Professor Mark Solms, The Hidden Spring: A Journey to the Source of Consciousness.

Solms is director of neuropsychology in the Neuroscience Institute of the University of Cape Town, honorary lecturer in neurosurgery at the Royal London Hospital School of Medicine, and an honorary fellow of the American College of Psychiatrists. He has spent his entire career investigating the mysteries of consciousness. He achieved renown within his profession for identifying the brain mechanisms of dreaming and for bringing psychoanalytic insights into modern neuroscience. And now his book The Hidden Spring is bringing him renown far beyond his profession. Here’s a selection of the praise it has received:

- “A remarkably bold fusion of ideas from psychoanalysis, psychology, and the frontiers of theoretical neuroscience, that takes aim at the biggest question there is. Solms will challenge your most basic beliefs.” – Matthew Cobb, author of The Idea of the Brain: The Past and Future of Neuroscience

- “At last the emperor has found some clothes! For decades, consciousness has been perceived as an epiphenomenon, little more than an illusion that can’t really make things happen. Solms takes a thrilling new approach to the problem, grounded in modern neurobiology but finding meaning in older ideas going back to Freud. This is an exciting book.” – Nick Lane, author of The Vital Question

- “To say this work is encyclopaedic is to diminish its poetic, psychological and theoretical achievement. This is required reading.” – Susie Orbach, author of In Therapy

- “Intriguing…There is plenty to provoke and fascinate along the way.” – Anil Seth, Times Higher Education

- “Solms’s efforts… have been truly pioneering. This unification is clearly the direction for the future.” – Eric Kandel, Nobel laureate for Physiology and Medicine

- “This treatment of consciousness and artificial sentience should be taken very seriously.” – Karl Friston, scientific director, Wellcome Trust Centre for Neuroimaging

- “Solms’s vital work has never ignored the lived, felt experience of human beings. His ideas look a lot like the future to me.” – Siri Hustvedt, author of The Blazing World

- “Nobody bewitched by these mysteries [of consciousness] can afford to ignore the solution proposed by Mark Solms… Fascinating, wide-ranging and heartfelt.” – Oliver Burkeman, Guardian

- “This is truly a remarkable book. It changes everything.” – Brian Eno

At times, I had to concentrate hard while listening to this book, rewinding the playback multiple times. That’s because the ideas kept sparking new lines of thought in my mind, which ran off in different directions as the narration continued. And although Solms explains his ideas in an engaging manner, I wanted to think through the deeper connections with the various fields that form part of the discussion – including psychoanalysis (Freud features heavily), thermodynamics (Helmholtz, Gibbs, and Friston), evolution, animal instincts, dreams, Bayesian statistics, perceptual illusions, and the philosophy of science.

Alongside the theoretical sections, the book contains plenty of case studies – from Solms’s own patients, and from other clinicians over the decades (actually centuries) – that illuminate the points being made. These studies involve people – or animals – with damage to parts of their brains. The unusual ways in which these subjects behave – and the unusual ways in which they express themselves – provide insight into how consciousness operates. Particularly remarkable are the children born with hydranencephaly – that is, without a cerebral cortex – but who nevertheless appear to experience feelings.

Having spent two weeks making my way through the first three quarters of the book, I took the time yesterday (Sunday) to listen to the final quarter, where there were several climaxes following on top of each other – addressing at length the “Hard Problem” ideas of David Chalmers, and the possibility of artificial consciousness.

It’s challenging to summarise such a rich set of ideas in just a few paragraphs, but here are some components:

- To understand consciousness, the subcortical brain stem (an ancient part of our anatomy) is at least as important as the cognitive architecture of the cortex

- To understand consciousness, we need to pay attention to feelings as much as to memories and thought processing

- Likewise, the chemistry of long-range neuromodulators is at least as important as the chemistry of short-range neurotransmitters

- Consciousness arises from particular kinds of homeostatic systems which are separated from their environment by a partially permeable boundary: a structure known as a “Markov blanket”

- These systems need to take actions to preserve their own existence, including creating an internal model of their external environment, monitoring differences between incoming sensory signals and what their model predicted these signals would be, and making adjustments so as to prevent these differences from escalating

- Whereas a great deal of internal processing and decision-making can happen automatically, without conscious thought, some challenges transcend previous programming, and demand greater attention

In short, consciousness arises from particular forms of information processing. (Solms provides good reasons to reject the idea that there is a basic consciousness latent in all information, or, indeed, in all matter.) Whilst more work remains to be done to pin down the exact circumstances in which consciousness arises, this project is looking much more promising now than it did just a few years ago.

This is no idle metaphysics. The ideas can in principle be tested by creating artificial systems that involve particular kinds of Markov blankets, uncertain environments that pose existential threats to the system, diverse categorical needs (akin to the multiple different needs of biologically conscious organisms), and layered feedback loops. Solms sets out a three-stage process whereby such systems could be built and evolved, within a relatively small number of years.
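To make a few of those ingredients slightly more concrete, here is a toy Python sketch of my own. It is not Solms’s design, and it makes no claim to produce anything like consciousness. It only illustrates a system with several distinct needs, an environment that keeps pushing those needs away from their expected values, and a feedback step that attends to whichever need currently shows the largest weighted error. The names `Need`, `step`, `drift`, `gain` and the numbers used are all hypothetical, and the sketch does not attempt to model a Markov blanket formally.

```python
from dataclasses import dataclass


@dataclass
class Need:
    """One homeostatic variable (hypothetical examples: energy, temperature)."""
    name: str
    set_point: float      # the value the system expects to sense
    value: float          # the value it currently senses
    weight: float = 1.0   # how much a deviation in this need matters

    def error(self) -> float:
        """Weighted gap between the sensed value and the expected value."""
        return self.weight * abs(self.value - self.set_point)


def step(needs: list[Need], drift: dict[str, float], gain: float = 0.5) -> str:
    """Run one sense-compare-act cycle; return the name of the need acted on."""
    # The environment perturbs every need.
    for need in needs:
        need.value += drift.get(need.name, 0.0)
    # Prioritise the need with the largest weighted error...
    worst = max(needs, key=Need.error)
    # ...and act to pull it part of the way back toward its set point.
    worst.value += gain * (worst.set_point - worst.value)
    return worst.name


needs = [
    Need("energy", set_point=1.0, value=1.0),
    Need("temperature", set_point=37.0, value=37.0, weight=0.2),
]
drift = {"energy": -0.1, "temperature": 0.3}

# Twenty cycles: the system keeps switching attention between its needs.
history = [step(needs, drift) for _ in range(20)]
total_error = sum(need.error() for need in needs)
print(history)
print(round(total_error, 3))
```

In this toy, the two needs cannot be traded against each other on a single scale except through the arbitrary `weight` values, which is a crude stand-in for the “categorical” character of needs described above. A more serious experiment would also need uncertainty, learning, and layered feedback, none of which is attempted here.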

But wait. All kinds of questions arise. Perhaps the most pressing one is this: If such systems can be built, should we build them?

That “should we” question gets a lot of attention in the closing sections of the book. We might end up with AIs that are conscious slaves, in ways that we don’t have to worry about for our existing AIs. We might create AIs that feel pain beyond that which any previous conscious being has ever experienced. Equally, we might create AIs that behave very differently from those without consciousness – AIs that are more unpredictable, more adaptable, more resourceful, more creative – and more dangerous.

Solms is doubtful about any global moratorium on such experiments. Now that the ideas are out of the bag, so to speak, there will be many people – in both academia and industry – who are motivated to do additional research in this field.

What next? That’s a question that I’ll be exploring this Saturday, 6th March, when Mark Solms will be speaking to London Futurists. The title of his presentation will be “Towards an artificial consciousness”.

For more details of what I expect will be a fascinating conversation – and to register to take part in the live question and answer portion of the event – follow the links here.